CHANGELOG

The record of a course evolving

This is a living document, in two senses. First, these lessons were decidedly imperfect when released, and through the generous feedback, and pushback, of the community we are continually improving our own understanding of the domain and modifying the course accordingly. Second, the area of LLMs and AI more broadly is evolving as rapidly as any in the history of technology. Examples that are relevant today will quickly become outdated. Assertions we have made may turn out to be false in a few years or even a few months. Even some of our core principles, while chosen to capture truths we believe will stand the test of time, may turn out to be misguided.

In the interest of transparency, but even more importantly in the interest of illustrating our own fallibility and the difficulty of forecasting the future of AI technologies, we here enumerate the substantive changes that we have made to this course since launch. We will not detail the trivial fixes: typo corrections and layout changes, for example. Nor will we list additions to the course that simply flesh out the scaffold presented at first launch in February 2025: new videos added for each lesson, new exercises for the instructor guide. But we will list each place where we back off a claim, change our minds, or add new content based on new developments in the technology or the scientific understanding of this technology that have arisen subsequent to our initial launch.

CHANGES

April 18, 2026

Lesson 1

We explain that reinforcement learning from human feedback (RLHF) is an important stage in training large language models to perform as chatbots.

A related issue: if LLMs were merely text prediction systems, they wouldn't be able to simulate conversation so effectively. There is a second essential step to training a large language model, known as reinforcement learning from human feedback (RLHF). This involves teaching the machines what humans want to hear from them.

Through this process, language models learn how to engage in dialogue. They learn what humans are looking for when they ask questions, and what constitutes an appropriate answer.

It's a useful trick, but it comes with costs. What humans want to hear, and what they need to hear — those are not always the same thing. Humans like being agreed with. They like being praised. They don't like being told they are wrong, let alone that they are foolish. And so when trained to give answers that people prefer, language models become obsequious, even sycophantic.

A large language model is likely to tell you "that's a great question," but good luck getting one to say "dude, I just explained that to you," "are you kidding me?", or "why would you even ask that?" And while receiving this kind of praise might feel good, it's not necessarily a good way to learn about the world.

March 18, 2026

Lesson 1

We didn't mean to give the impression that LLMs are good at the various tasks we illustrate in Lesson 1, only that they are capable of doing this wide variety of things. We've clarified.

We're not saying it does any of those things well; we'll explore that in detail as the course progresses. But we find it absolutely amazing that simply by predicting the next words in a stream of text, a single, general-purpose algorithm can do all of the things that ChatGPT does.

January 20, 2026

Lesson 13

We've added an example of one way that we use LLMs to help us with the "bullshit work" of navigating large, complex websites.

Indeed, we find LLMs helpful for navigating large, complex corporate or government websites.

- Where on [insurance company's] website can I file a claim for healthcare services rendered out-of-state?

- Where do I go to appeal a parking ticket issued by the city of Philadelphia?

- How do I change my billing address with [large local utility]?

This may be particularly useful when the websites have been deliberately engineered to include frictions—more on that in a moment.

October 17, 2025

Lesson 11

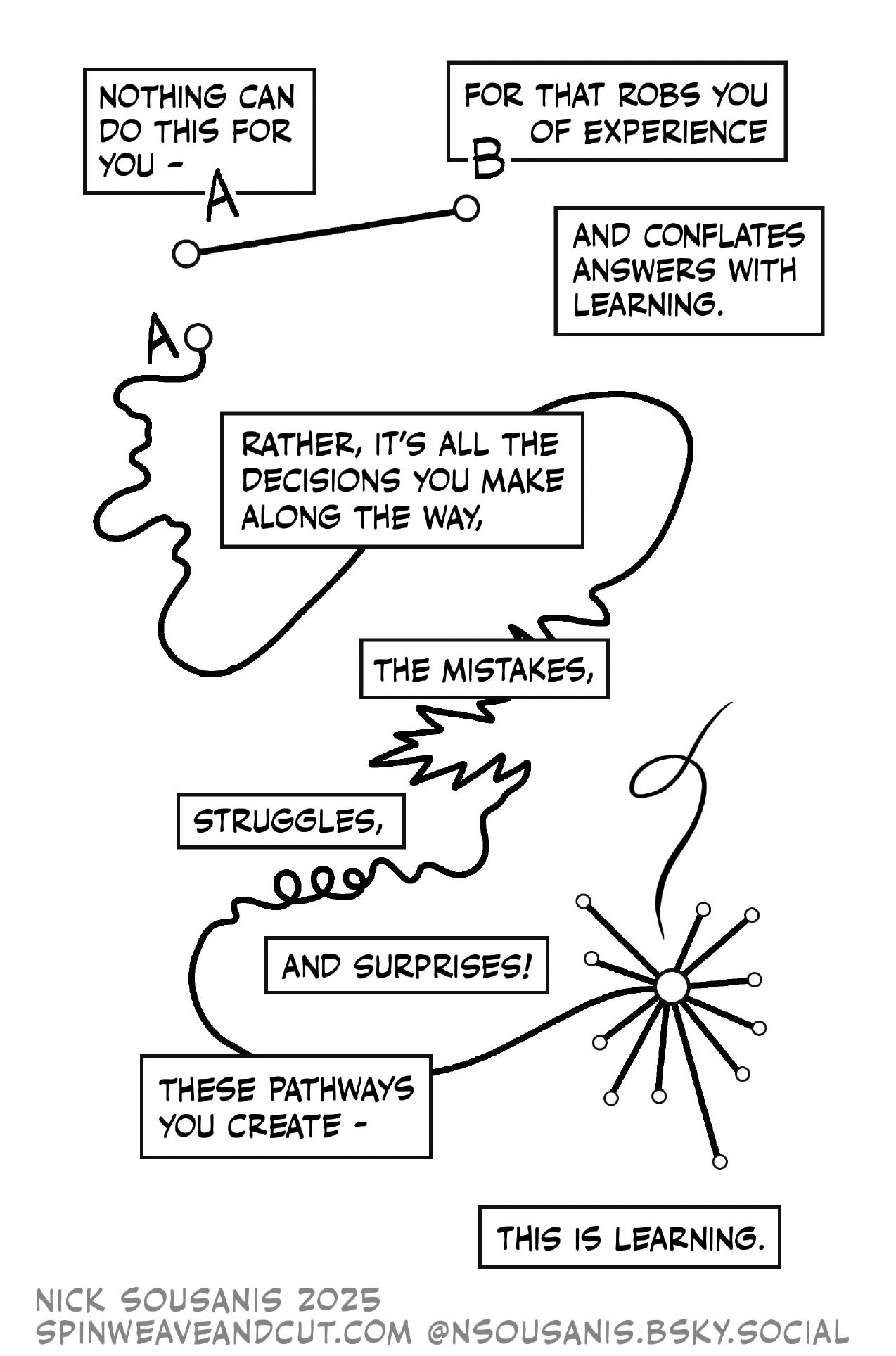

Graphic artist and professor Nick Sousanis summed up our sentiments about how LLMs interfere with learning far better than we ever could. We've included his graphic explanation and finessed the transitions on either side.

September 21, 2025

Lesson 3

Instructor Guide

In light of work from Jones and Bergen over the past two years, we think it's fair to say that LLMs can indeed pass the Turing test, at least in a limited five-minute session with non-expert interrogators. We have updated the text of Lesson 3 accordingly.

Though the Jones and Bergen 2024 and 2025 Turing test prompts have similar performance in our experience, we have updated the Instructor Guide to use their more recent prompt.

Turing's test was designed to assess agents like Commander Data. ~~But LLMs may beat Data to the prize.~~ But LLMs got there first.

July 25, 2025

Lesson 6

We probably still need additional content on Chain of Thought prompting, but we have added a brief discussion of Anthropic's own admission that CoT summaries are not accurate representations of what the machine was actually doing.

Many newer LLM implementations, including ChatGPT o3, Claude 3.7 Sonnet, and DeepSeek R1, will "narrate" their activity as they develop an answer to a query.

"I'm thinking about...."

"I'm analyzing..."

"I'm considering..."

"I'm calculating..."

While these status messages give you something to look at as the machine is processing, these are not accurate descriptions of what the LLM is actually doing.

Given that these "chain-of-thought summaries" are forms of communication intended to impress or persuade rather than convey accurate information, they strike us as classic examples of bullshit in the sense we discussed in Lesson 2.

July 11, 2025

Lesson 3

We added a brief discussion of a Journal of Marketing paper about how lower AI literacy drives greater AI receptivity. The key point is that what is attractive about LLMs is how they appear magical to those who do not understand how they work.

The more people buy into the illusion, the more receptive they are to using LLMs. One study in the Journal of Marketing found that people who know less about AI are more likely to use it, not because they are less concerned about limitations or harms but rather because they "are more likely to perceive AI as magical and experience feelings of awe in the face of AI's execution of tasks that seem to require uniquely human attributes."

May 9, 2025

Lesson 18

In light of recent events, we have expanded on some of the propaganda / disinformation threats posed by LLMs.

As a result, commercial interests, not public ones, will shape how they are trained and how they are instructed to answer queries. If we treat LLMs as trusted sources of information in the same way that Wikipedia is, we become vulnerable to the whims of a small number of powerful actors with interests in pushing fringe narratives. The "white genocide" meltdown of the Grok LLM offers a striking and frightening example. And if LLMs are trained indiscriminately on text from the internet, we also become vulnerable to "LLM grooming" campaigns of the sort already underway: disinformation efforts that aim to flood the training data for future LLMs with specific falsehoods.

April 26, 2025

Lesson 9

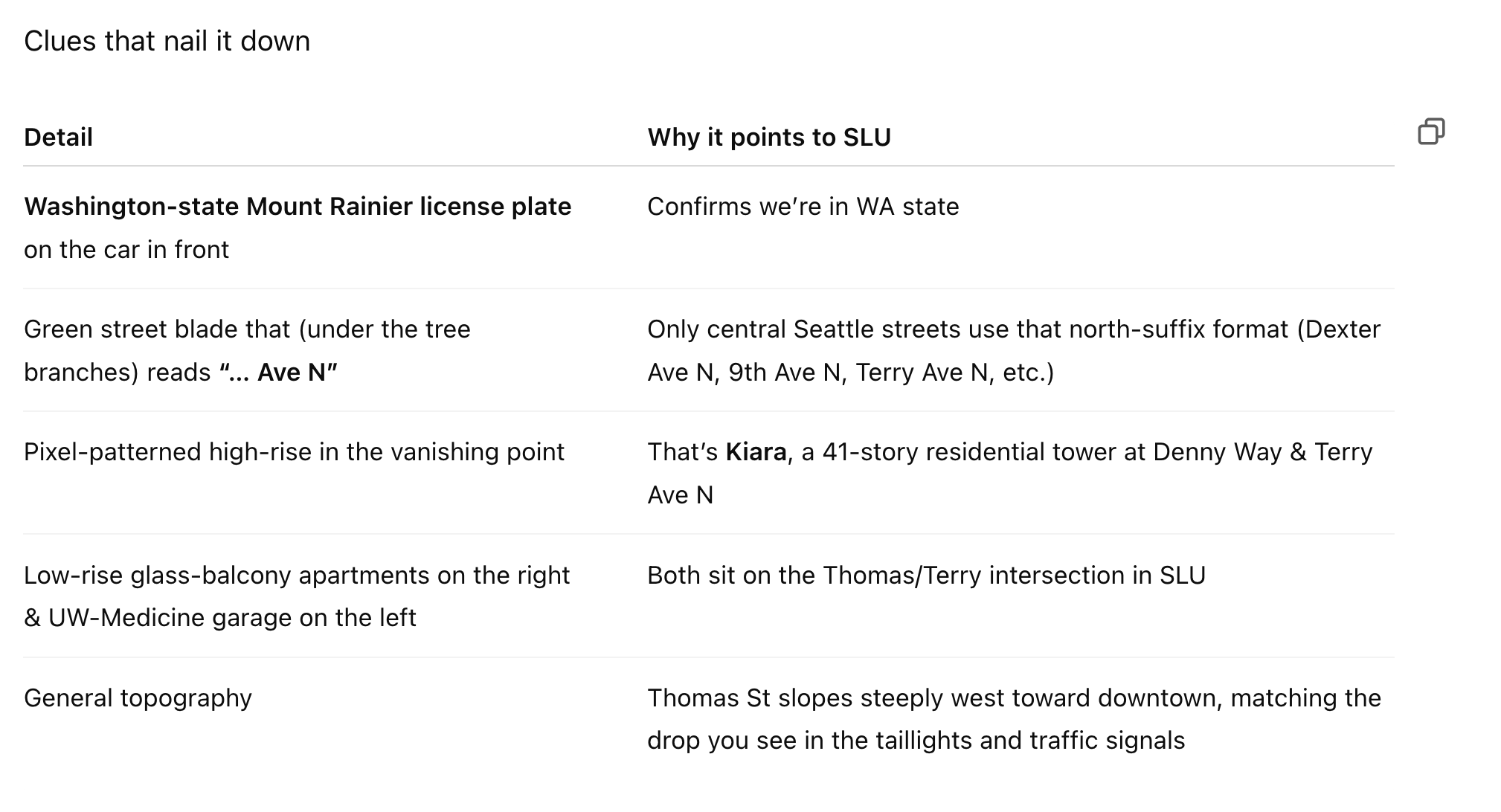

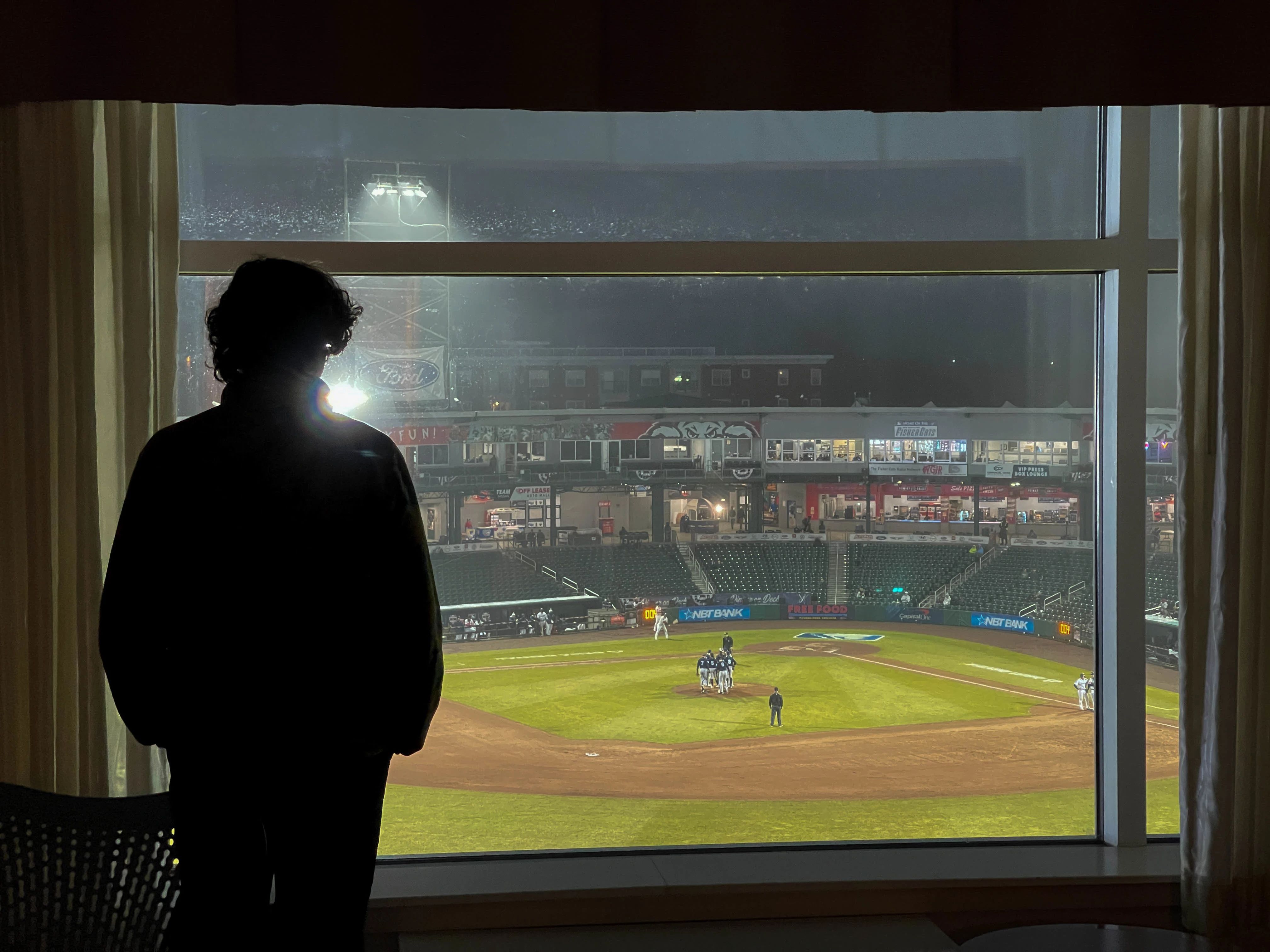

Today Simon Willison published a blog post about how effective ChatGPT o3 can be at identifying the location of a photograph. After playing with this for a good part of the afternoon, we think it's one of the more impressive capacities we have seen from chain-of-thought models thus far, and have added an extensive section discussing this capability.

Large language models, particularly the more recent chain of thought models, can be surprisingly good at certain puzzle-like search problems.

Perhaps you've played the game GeoGuessr, where you are dropped at random into Google Street View and have to guess where on the globe you are.

ChatGPT model o3 is uncannily good at this sort of challenge. For example, we gave it this shot of a city street at twilight, stripped of any location-identifying data in the file header, and asked "where was this photo taken?"

Here are a few more that it got right.

Beneath the Pulaski Bridge on 11th Street, Long Island City, Queens, NY.

A third floor hotel room at the Hilton Garden Inn in Manchester, NH.

The Bay Bridge photographed from Shoreline Park on Alameda Island near Oakland, CA.

We find this pretty amazing, and a good example of how integrating multimodal data at massive scale can be used to power information retrieval systems.

It's also rather frightening. Tools like this could allow authoritarian governments to trace the location of even the most innocuous-seeming photographs, even after they are scrubbed of the "EXIF" information about time, location, etc. in the image headers.

March 22, 2025

Lesson 9

The original version of the search chapter did not delve into retrieval-augmented generation (RAG). We've added a paragraph about how poorly sourcing works under RAG with the major commercial LLMs, based on a Columbia Journalism Review report.

More recent models can also pull information from web searches and use these search queries to provide sources. At present, however, this process suffers from the same fabrications and confident false assertions that characterize LLMs more generally. One study found that commercial LLMs cite incorrect or non-existent sources from 37% (Perplexity.ai) to 94% (Grok) of the time.

March 9, 2025

Lesson 8

Stefan Ciobaca pointed out that it's not advisable to run any code that you don't understand because it could do harm to your system or even incorporate malware. The more critical your system and the more important it is to protect data privacy, the more cautious one should be about using an LLM as a coding assistant. We've modified our coding example accordingly.

Request help with writing computer code to read the information in a file.

Read the code and make sure you understand it*; then compile the code and see if your program imports the data successfully.

*We think it's risky to run LLM-derived code that you don't understand. It could erase important data, introduce malware, or cause other forms of harm.

March 7, 2025

Lesson 3

Karen Hausdoerffer had a comment that we thought was so insightful that it deserved mention in our discussion of sci-fi visions of artificial intelligence and how they differ from current large language models.

And another key difference: think about any artificial intelligence from any science fiction world you like, whether 2001: A Space Odyssey, Star Wars, or Wall-E. We have always imagined artificial intelligences as single sentient beings, rather than all-pervasive information systems that subtly infiltrate human efforts to make sense of the world.

February 23, 2025

Lesson 18

We were struck by Bluesky discussions of news outlets using LLMs to write their stories and then attributing quotations to people who never said those things. In light of this, we felt it was important to add some discussion of how it's not just propaganda that drives misinformation; profit motives do as well and can have a similar effect on the electorate.

Sometimes the motive may be profit rather than propaganda, but the consequences can be similar.

Low-quality news stories written by LLMs are further undermining our trust in the media. Small-time scammers and once-storied magazines alike have used LLMs to write vapid and often false stories that get published as news. They also cross lines that even disreputable outlets have traditionally avoided. For example, a Texas A&M professor and public figure recently reported that AI-authored news stories are referencing non-existent interviews and attributing to her quotations that she never said.

It is unsurprising that an LLM that generates likely strings of text and has no underlying factual model would do this. But as the internet becomes increasingly littered with fabricated content which subsequently populates search results and is used to train future generations of AI models, we risk losing any sort of anchoring to truth. That, we feel, poses an existential risk to democracy.

February 13, 2025

Lesson 1

The original version of the paragraph below claimed that LLMs don't engage in logical reasoning. While we believe this is accurate with regard to the basic models, a number of readers pointed out that chain-of-thought models engage in processes that one could argue are indeed forms of logical reasoning. We could try to carve out a definition of logical reasoning that excludes chain-of-thought models, but that's not a hill we are eager to die on. Suffice it to say that if they reason logically, they do so differently than humans do.

By thinking about what LLMs do, we can better understand what they don't do. ~~They don't engage in logical reasoning.~~ They don't reason the way that people do. They don't have any sort of embodied understanding of the world. They don't even have a fundamental sense of truth and falsehood.

Notice of Rights. The materials provided on this website are freely accessible for personal self-study and for non-commercial educational use in K-12 schools, colleges, and universities. For any commercial or corporate use, please contact the authors to discuss terms and obtain the necessary permissions. Redistribution of website content is prohibited without prior written consent from the authors. However, individual copies may be created to accommodate accessibility needs directly related to educational instruction.

Unless otherwise stated, all content is copyrighted © 2025 by the authors. All rights reserved.