LESSON 10

The Human Art of Writing

Developed by Carl T. Bergstrom and Jevin D. West

"You let us use calculators — why not ChatGPT?" our students ask.

We explain that calculating and writing are entirely different kinds of activities.

Arithmetic is a mechanical process, ideally suited for automation. It has a single, right answer — and calculators find it, unerringly.

Writing is an art. It’s a personal, individual form of communication. There’s no single right way to say something—and different authors naturally express themselves in different ways.

Moreover, writing is a generative activity.

We write to figure out what we think.

When we write, we sharpen our ideas, refine our thinking, and engage in a creative activity that yields new insights only once pen hits paper.

If we offload the task of writing onto an AI, we lose the opportunity to think.

Then there is the matter of quality. While LLMs may generate passable prose, they are not good writers. Being a good writer requires far more than competent grammar. It requires voice. It requires humanity. It requires authenticity.

When we write, we share the way that we think. When we read, we get a glimpse of another mind. But when an LLM is the author, there is no mind there for a reader to glimpse.

Now consider style. Good writers exhibit a powerful sense of rhythm; LLMs do not. Good writers plan out paragraphs and sections like generals plan campaigns; LLMs ramble and natter. Good writers are surprising; LLMs are designed not to be. Good writers deploy novel metaphors; LLMs recycle hackneyed ones. A good writer expresses a unique perspective; LLMs gravitate to the least common denominator of the text on which they are trained.

Why not train LLMs solely on the work of great writers? In part, the median prose of several good writers is not necessarily good writing.

In part, the greatness of great writers stems from their originality. Think of the opening line of Gabriel García Márquez's One Hundred Years of Solitude. Great writing is unexpected and unpredictable. As predictive text generators, LLMs will seldom if ever manage this. It's not what they are designed to do.

LLMs may ingest the writings of great authors, but their words are swamped amidst petabytes of overwrought fan fiction and guys mansplaining things on Reddit. It shows.

"Many years later, as he faced the firing squad, Colonel Aureliano Buendía was to remember that distant afternoon when his father took him to discover ice." (Opening line of One Hundred Years of Solitude, Gabriel García Márquez)

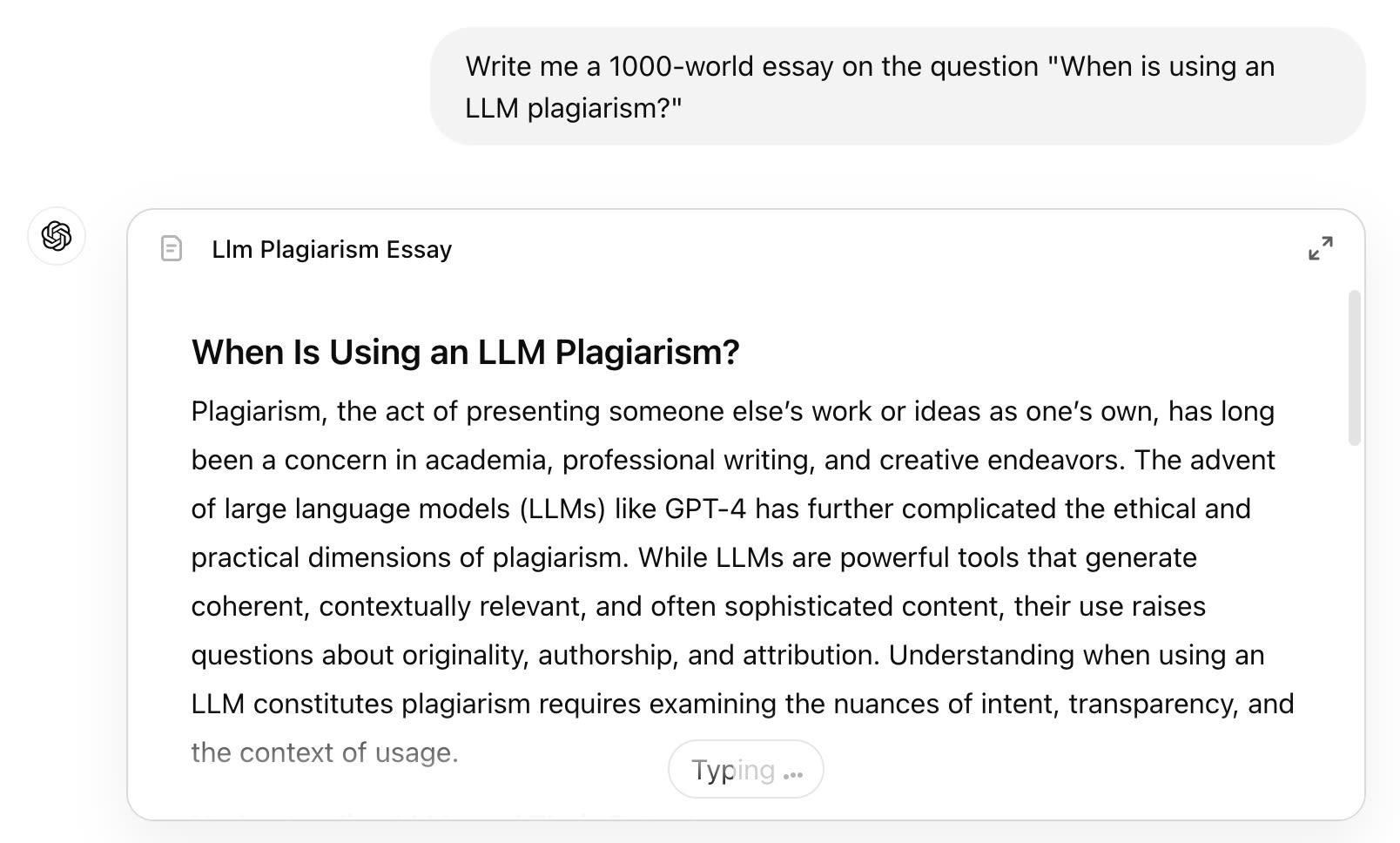

Then there is the issue of plagiarism.

Oxford University defines plagiarism as follows:

"Presenting work or ideas from another source as your own, with or without consent of the original author, by incorporating it into your work without full acknowledgement."

If you hand off the entire process of developing ideas and drafting text to a large language model, without acknowledging that you have done so, this is unequivocally plagiarism. You are presenting ideas from another source as your own. Oxford concurs:

"All published and unpublished material, whether in manuscript, printed or electronic form, is covered under this definition, as is the use of material generated wholly or in part through use of artificial intelligence."

Critically: plagiarism is not just about stealing words or ideas from another person. It's about misrepresenting them as yours. The fact that a machine wrote the words does not make it OK.

A situation that would be considered plagiarism if it were presented as one's own work.

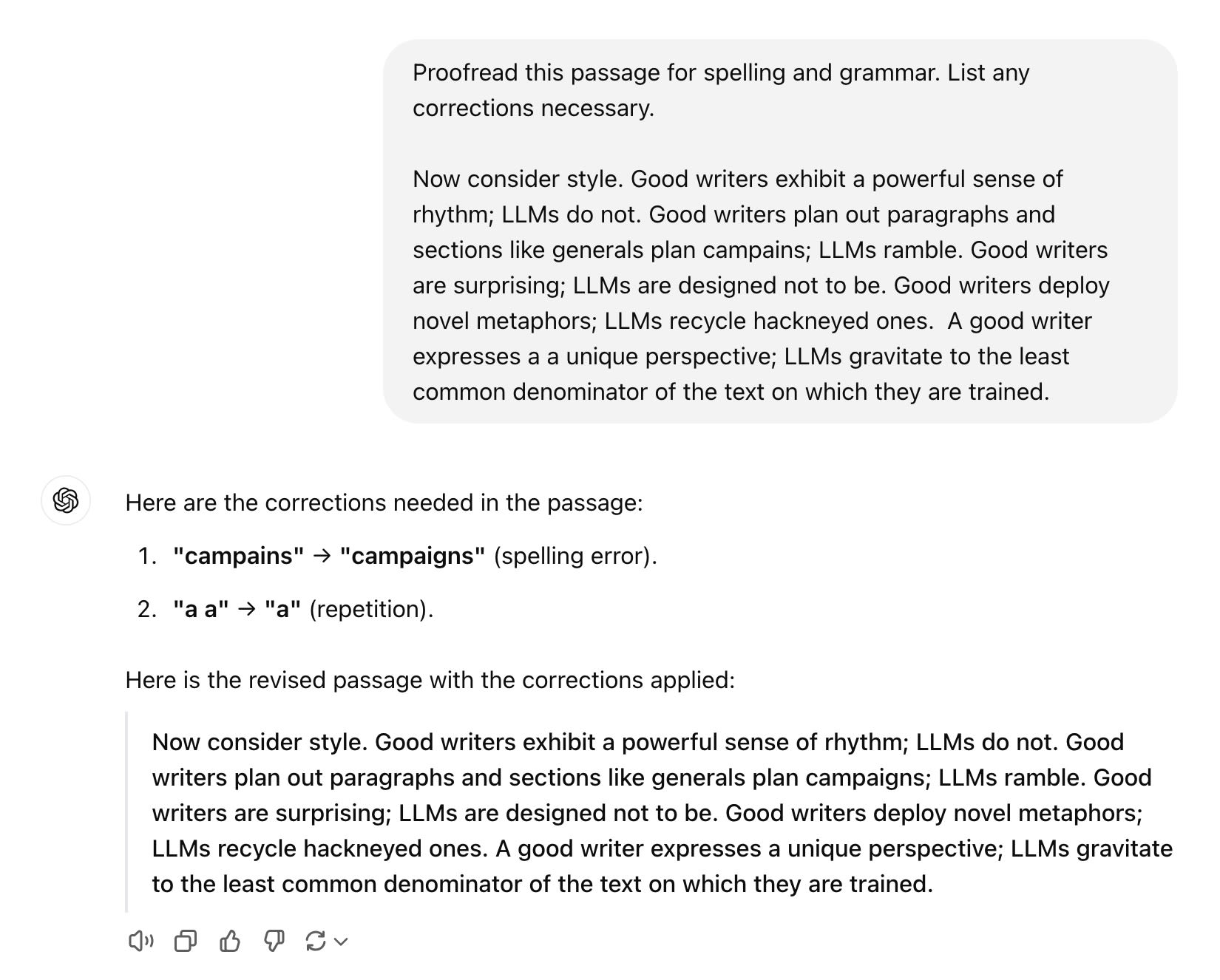

There are ways you can use an LLM ethically when you write.

It's not plagiarism to ask a friend to proofread your paper for spelling and grammar; we don't think it's plagiarism to ask an LLM to do this either.

A use that we consider not to be plagiarism: basic proofreading for spelling and grammar.

But what if you ask the LLM to rewrite a passage for you entirely? Now the ideas are yours, but you don't know where the specific words are coming from. Maybe they're a combination that no one has ever used before. Or maybe the machine has extracted a long string of text from its training set. You have no way of knowing, and that's dangerous.

The same holds for other tasks: asking an LLM to summarize a set of articles in a few paragraphs, having it convert a bullet-point outline into an essay, or even having it write a verbal description to accompany an image or diagram.

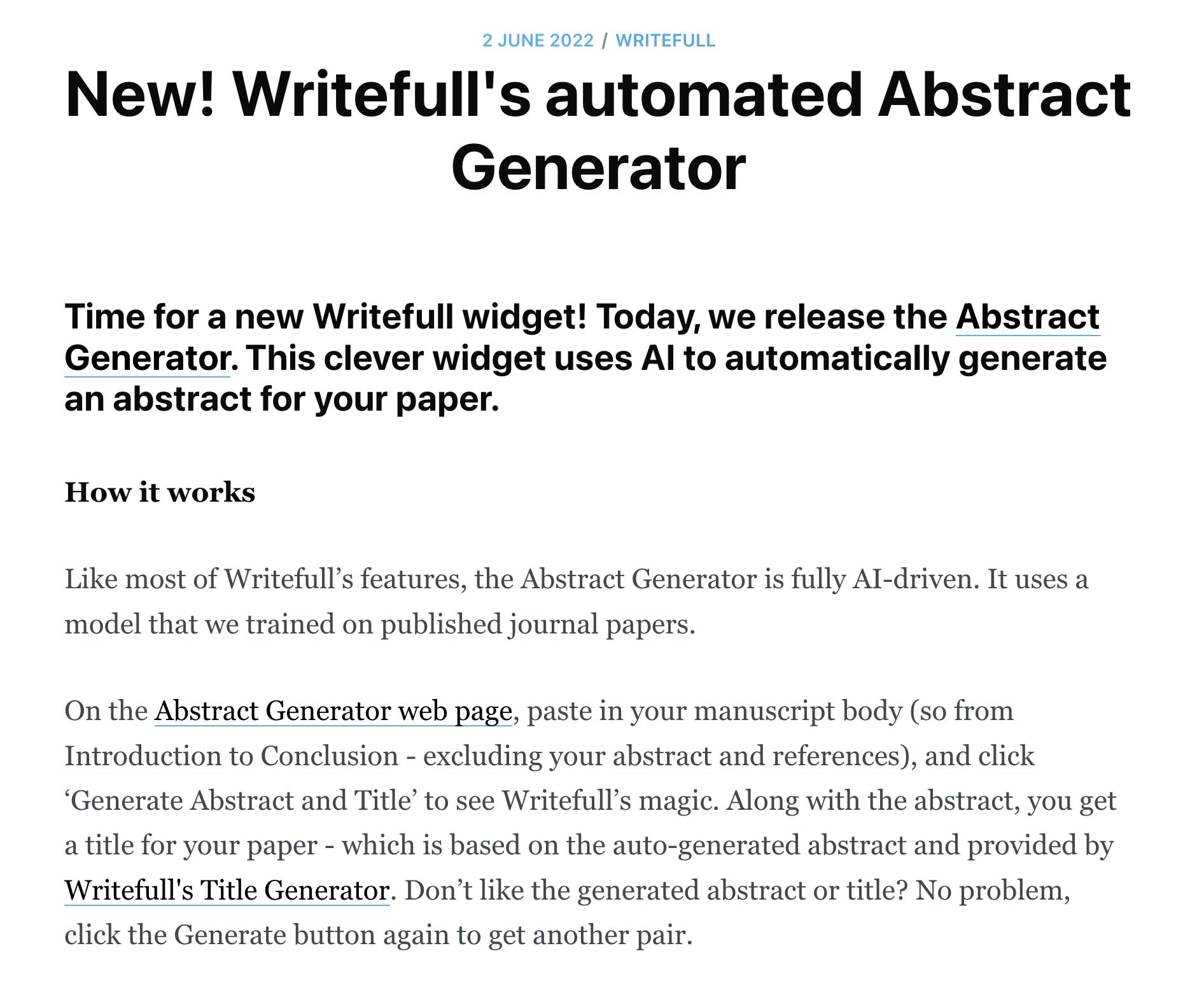

For example, the academic writing package Writefull uses an AI model trained on journal papers to suggest a title and write an abstract for your paper. You simply give it the paper text, and it does the rest. This seems unethical to us.

And ethics aside, it's a terrible idea on practical grounds. You have no way of knowing whether its words are borrowed directly from another source. And if they are, you've plagiarized the part of your paper that is the most visible—you've plagiarized precisely where you are most likely to get caught.

We think this is a bad idea. Source: blog.writefull.com

PRINCIPLE

LLMs don’t replace the art of writing the way that calculators replace the task of doing arithmetic. Their prose is grammatically correct, but mediocre at best. When we allow LLMs to write for us, we lose the opportunity to think. When we hand off our writing to LLMs, we are giving up our own agency in the world.

DISCUSSION

Suppose that in a decade, machines take over much of the task of writing and humans use them as tools to get ideas onto paper quickly. What is gained? What is lost?
