LESSON 8

Poisonous Mushrooms and Doggie Passports

Developed by Carl T. Bergstrom and Jevin D. West

When experts trust AI models to make high-stakes decisions, bad things happen. One study showed that the diagnostic ability of radiologists plummets when they are working in concert with an inaccurate AI.

Non-experts are even more vulnerable.

A number of AI-generated and error-ridden books about mushroom identification have been found on Amazon.com. In a viral Reddit thread, one man claimed that a family ended up in the hospital with severe poisoning after buying and using one of these books.

In another incident, a woman asked a veterinary chatbot what to do when her dog was suffering from diarrhea. The AI recommended euthanasia, perhaps unnecessarily, and suggested local vets who could end the dog's life. The woman complied. A further twist? This story came to light only when the CEO of the veterinary telehealth company decided that his machine’s persuasiveness and “humanity” were something to boast about at an industry association lecture.

Photo by Jimi Malmberg on Unsplash

The examples above involve high-stakes decisions where the machine's answers could not be readily verified. Such cases are the worst situations in which to rely upon an AI.

LLMs can be very useful for low-stakes matters, especially when you can easily and safely check whether the LLM is correct.

Suppose instead of euthanizing your dog, you need to travel with it from the US to Canada.

Does your pup need health records?

What sort?

Does it need a passport?

Are doggy passports even a thing?

Who issues them?

The State Department?

The Department of Agriculture?

Ask an LLM how to get started—and ask it for links to the appropriate government sources.

You can’t trust it to be right, but once you follow its suggestions to those websites, you can see if you are on the right track. When we tried it, ChatGPT steered us to this site, which provided just the information we needed from an authoritative source: the Canadian government.

Photo: Carl Bergstrom

Here are some other examples of good ways to use an LLM.

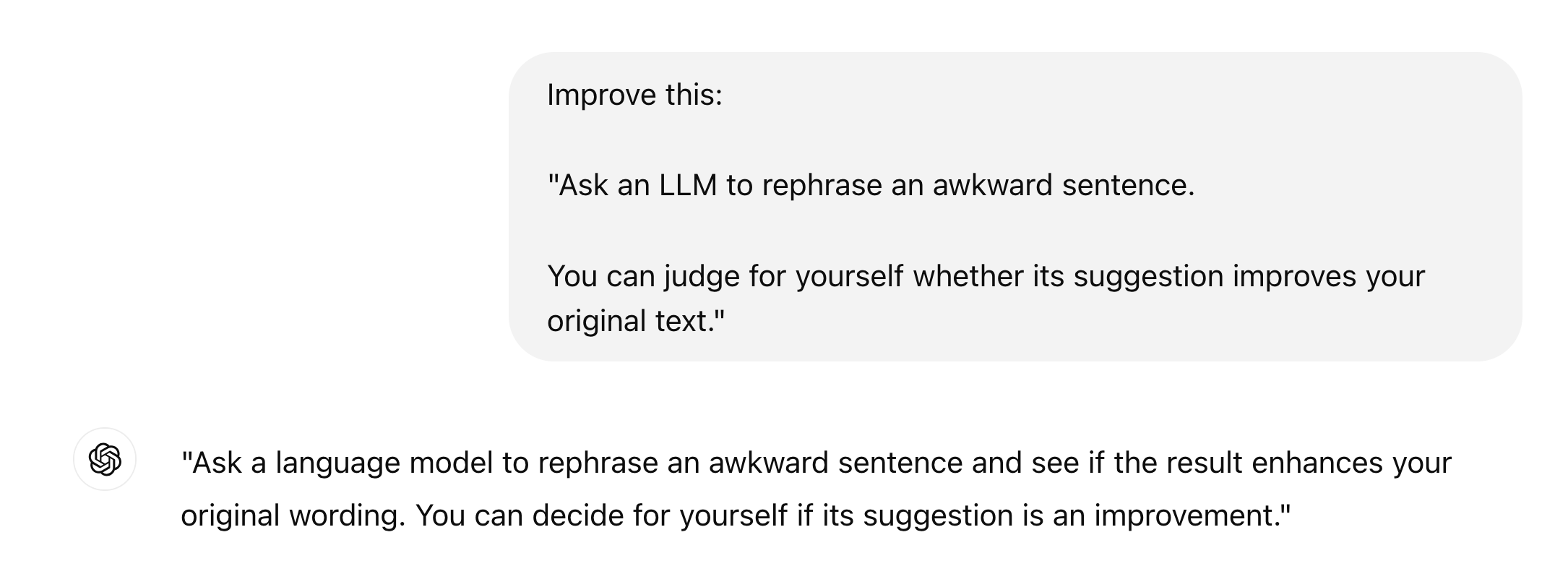

Ask an LLM how to rephrase an awkward sentence.

You can judge for yourself whether its suggestion improves your original text*.

*It often won't, as in the example here.

ChatGPT 4o query 1/3/2025

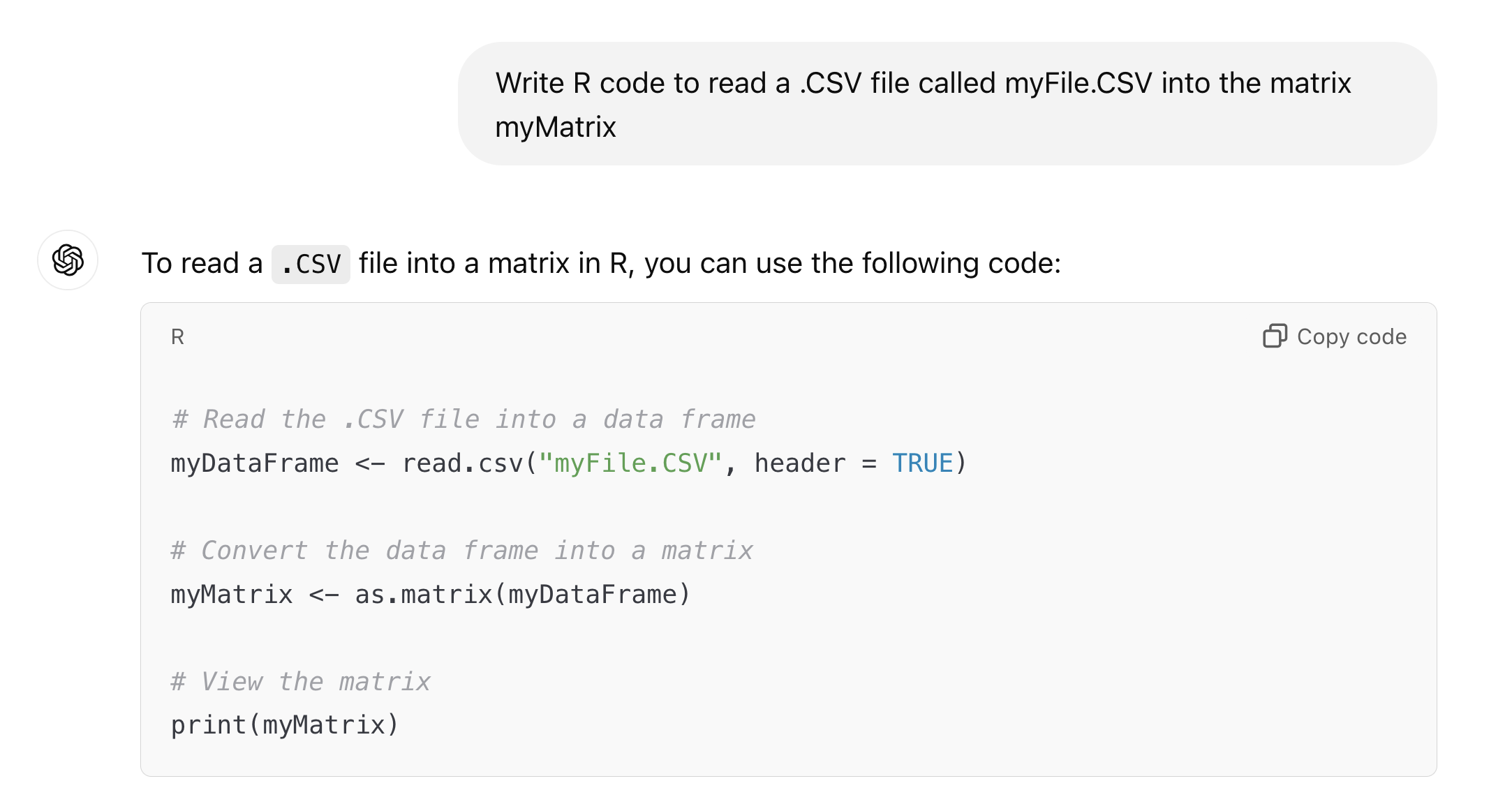

Request help with writing computer code to read the information in a file.

Read the code and make sure you understand it*; then compile the code and see if your program imports the data successfully.

*We think it's risky to run LLM-derived code that you don't understand. It could erase important data, introduce malware, or cause other forms of harm.

ChatGPT 4o query 12/15/2024
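
To make the "read it, run it, check it" routine concrete, here is a minimal Python sketch of what a file-reading helper and its sanity check might look like. It is an illustration under our own assumptions, not the code from the query above: the function names `load_rows` and `check_import`, the column names, and the sample data are all hypothetical.

```python
import csv
import io


def load_rows(handle):
    """Read CSV text from an open file-like object into a list of dicts."""
    reader = csv.DictReader(handle)
    return [dict(row) for row in reader]


def check_import(rows, expected_columns, expected_count):
    """Return a list of problems found; an empty list means the import looks right."""
    problems = []
    if len(rows) != expected_count:
        problems.append(f"expected {expected_count} rows, got {len(rows)}")
    for index, row in enumerate(rows):
        # csv.DictReader fills in None when a row has fewer fields than the header.
        missing = [c for c in expected_columns if row.get(c) in (None, "")]
        if missing:
            problems.append(f"row {index} is missing {', '.join(missing)}")
    return problems


# Hypothetical sample data standing in for a real file on disk.
SAMPLE = "name,species\nRex,dog\nTom,cat\n"

rows = load_rows(io.StringIO(SAMPLE))
problems = check_import(rows, ["name", "species"], expected_count=2)
print(problems or "import looks right")
```

With a real file you would pass `open("your_file.csv", newline="")` in place of the `io.StringIO` stand-in. The check is the point: you state in advance what a successful import should look like, so a wrong suggestion from the LLM shows up immediately rather than silently.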

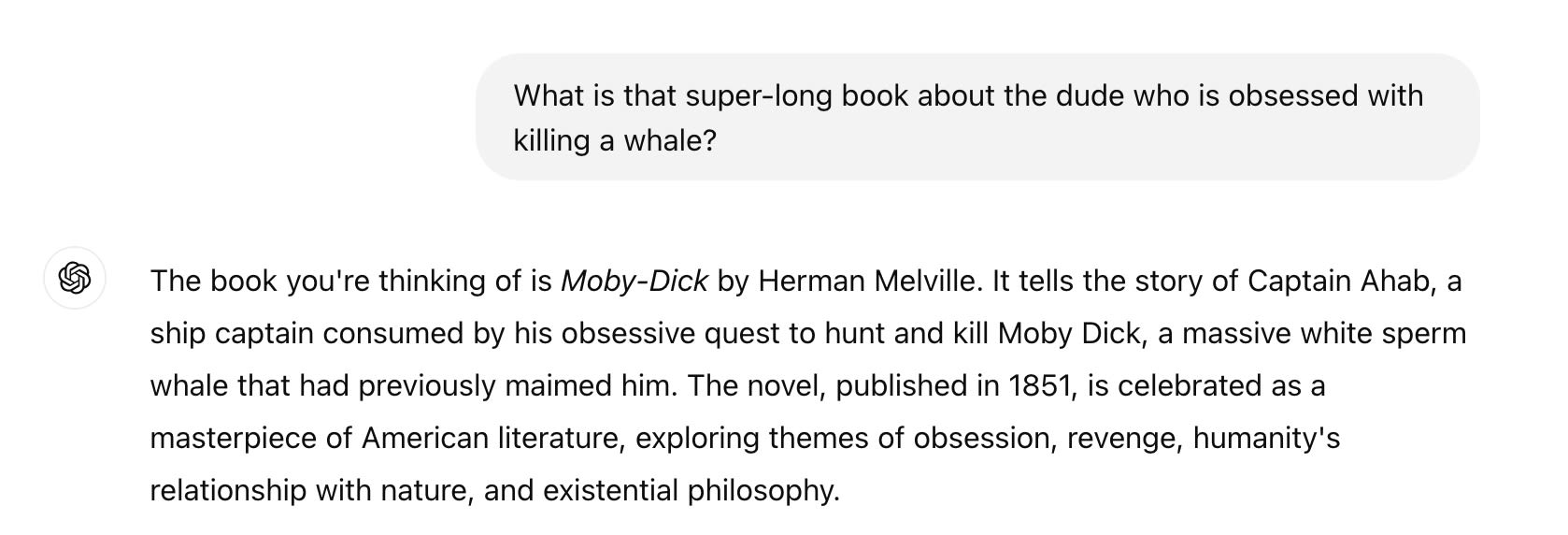

Sometimes a fact is on the tip of your tongue, but you just can't remember it without a bit of help.

Ask an LLM to remind you of the name of that book about the guy who was trying to kill the whale. If it answers something other than Moby Dick, you'll know it's wrong.

ChatGPT 4o query 12/15/2024

Another place where large language models shine is in knowing what people tend to write or say.

This makes perfect sense; they've been trained on huge volumes of text, and engineered to produce likely utterances given what they've seen before.

For example, we wanted to know whether the appropriate expression for true randomness in the world was “aleatory uncertainty” or “aleatoric uncertainty.” ChatGPT provided the answer. (It's “aleatory.”)

A friend wanted to know the euphemism for a stock market crash that literally just means variability. Again, ChatGPT answered right away. (It's “volatility.”)

These may seem like trivial matters, but think about the value for speech recognition. By coupling basic acoustic technology with a statistical model of what people are likely to say, your phone can do a much better job of transcribing your speech.

Or consider other forms of assistive technology. In 2012, we heard physicist Stephen Hawking give a public lecture. He had written the talk itself in advance, but afterward he graciously took questions from the audience. Using the technology of the day, it took him several minutes to compose a two-sentence answer to each query. Think about how much faster he could have communicated with audiences and fellow researchers with the help of a modern predictive text model!

The point is that LLMs tend to generate common linguistic expressions. For speech recognition, assistive technology, and similar uses, this is great. By definition, most things that people say are things that people frequently say — and LLMs are very good at anticipating these.

These applications don't require the AI to understand anything at all. They simply lean in to LLMs' capabilities as autocomplete systems.
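
To see how far plain word statistics can go, consider a toy autocomplete that simply counts which word tends to follow which. This is a deliberately tiny sketch under our own assumptions, not how any real LLM or phone keyboard is built: the names `train_bigrams` and `suggest` and the four-sentence corpus are hypothetical.

```python
from collections import Counter, defaultdict


def train_bigrams(sentences):
    """Count, for each word, how often each other word comes right after it."""
    following = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.lower().split()
        for current, nxt in zip(words, words[1:]):
            following[current][nxt] += 1
    return following


def suggest(following, word, k=2):
    """Return up to k of the most frequent next words seen after `word`."""
    counts = following.get(word.lower(), Counter())
    return [candidate for candidate, _ in counts.most_common(k)]


# Hypothetical corpus; a real system would be trained on vastly more text.
CORPUS = [
    "thank you very much",
    "thank you for coming",
    "thank you very kindly",
    "see you soon",
]

model = train_bigrams(CORPUS)
print(suggest(model, "thank"))   # most common word after "thank"
print(suggest(model, "you", 1))  # most common word after "you"
```

Nothing in this code knows what gratitude is. It only knows that "you" usually follows "thank" in the text it has seen, and for finishing a common phrase that is enough.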

PRINCIPLE

If answers need to be accurate, asking an LLM is risky—and the less expertise you have in the subject at hand, the riskier it is. Don’t let LLMs tell you what to do. But they can be very useful to tell you what to try, if you can easily and safely test their suggestions.

DISCUSSION

Sometimes it's clear whether a question is a poisonous mushroom question or a doggie passport question. What are some situations that are more ambiguous? How do you decide what to do in these cases?

VIDEO

Coming Soon.
