LESSON 9

Blue Links Matter

Developed by Carl T. Bergstrom and Jevin D. West

Large language models are not designed to retrieve information. They are designed to generate plausible text. Yet they are quickly replacing traditional search algorithms as the way we find answers to our questions, if only because search engines including Google are imposing them on us.

Let's consider four issues that arise in using LLMs in search: accuracy, sourcing, consistency, and fragility.

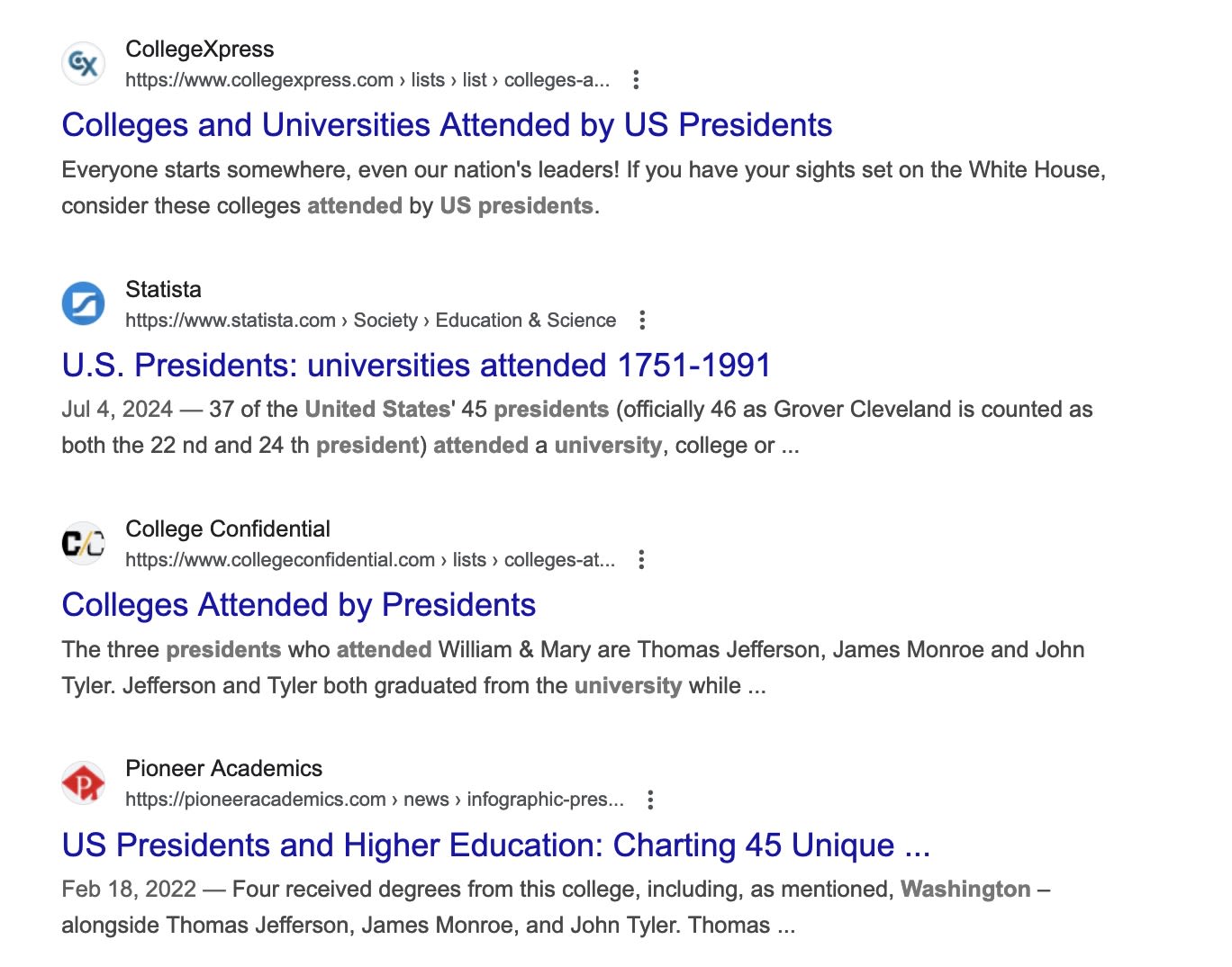

Accuracy. For over two decades, you could type anything you wanted to know about into Google, and immediately get a list of relevant websites. For example, suppose we wanted to know whether any US presidents attended the University of Washington, where we both teach.

If you typed "which US presidents attended the University of Washington" into the search bar, you might have gotten a list of websites such as these. You could follow the links, and see that there are no UW graduates among the ranks of United States presidents.

You can still ask Google such questions, but these familiar blue links no longer appear at the top of your search results. To reach them you have to scroll past an “AI Overview” written by Google’s Gemini LLM.

Worse still, like all LLMs, Gemini often makes up utter nonsense about the most basic matters of fact. Take our query about US presidents. Google incorrectly reports that four—Washington, Jefferson, Monroe, and Tyler—graduated from the University of Washington. Three of the four died before the UW was even founded.

This is a big problem, because people tend to trust Google and if you're not an expert, you are unlikely to question what it tells you. Or perhaps you are an expert, but don't realize how carefully you have to double-check everything Google tells you these days.

Sourcing. When LLMs pull information directly from their training data, their responses don't give you any way to check sources. They don't know their sources — and in fact they don't have sources in the way we typically think about them. They just have a vast soup of training data they use to predict what word should come next.

Current commercial LLMs are also able to pull information from web searches and use these search queries to provide sources. At present, however, this process suffers from the same fabrications and confident false assertions that characterize LLMs more generally. One study found that commercial LLMs cite incorrect or non-existent sources from 37% (Perplexity.ai) to 94% (Grok) of the time.

This is a big problem. A key theme in our book Calling Bullshit is the need to investigate claims by tracking them back to their sources. But the whole point of replacing search engines with large language models is to save users the trouble of thinking about sources at all. The CEO of the Perplexity AI search platform argues that AI has made blue links obsolete. Indeed, a 2025 Pew study found that when given an AI summary in response to a Google search, users only click on sourcing information 1% of the time.

Google query conducted 12/27/2024.


And without accurate sources, you are driving blind.

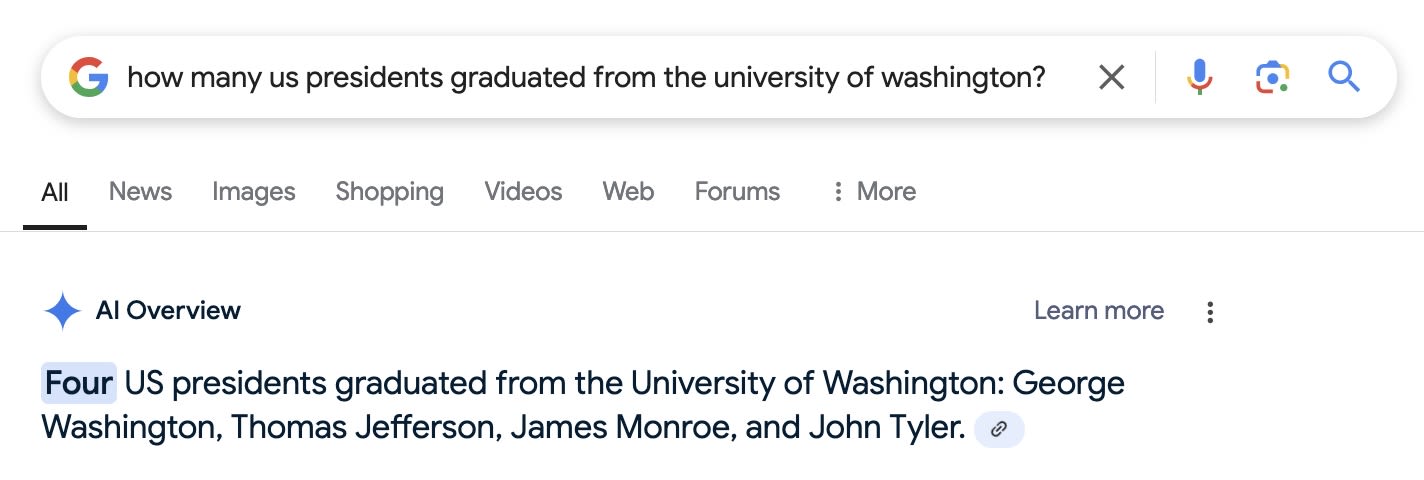

Consistency. Information retrieval systems need to be consistent. LLMs are anything but.

Imagine an enchanted encyclopedia that told you something different each time you looked up a topic. It might be fun to play with, but it wouldn't be a reliable information source.

When you query ChatGPT for facts about the world, this is essentially what you are getting. Ask the same question three times and you may get three different answers.

Recall that LLMs work by predicting likely next words in a string of text. It turns out that if you let them always pick the single most likely next word, their prose becomes stilted, they get stuck in loops, and they don't do a good job of creating human-like responses. The workaround is to add some randomness to the process. Most often they pick the most likely next word, a little less often they pick the second-most likely, and so on.

This may be desirable when using a language model for a conversational partner, but it's not what you want an information retrieval system to do.
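To make the role of randomness concrete, here is a minimal sketch in Python. It is a toy, not how any particular commercial model is implemented: the prompt, the three candidate words, and their probabilities are all invented for illustration, and the function names are hypothetical. It compares always picking the most likely next word with picking words in proportion to their probability.

```python
import random

# Hypothetical next-word probabilities for the prompt
# "The capital of Australia is". The numbers are invented for illustration.
NEXT_WORD_PROBS = {"Canberra": 0.6, "Sydney": 0.3, "Melbourne": 0.1}


def pick_greedy(probs):
    """Always return the single most likely next word."""
    return max(probs, key=probs.get)


def pick_sampled(probs, rng):
    """Return a next word at random, in proportion to its probability."""
    words = list(probs)
    weights = [probs[word] for word in words]
    return rng.choices(words, weights=weights, k=1)[0]


def ask_many_times(n, seed=0):
    """Simulate asking the same question n times under both strategies."""
    rng = random.Random(seed)
    greedy = [pick_greedy(NEXT_WORD_PROBS) for _ in range(n)]
    sampled = [pick_sampled(NEXT_WORD_PROBS, rng) for _ in range(n)]
    return greedy, sampled


if __name__ == "__main__":
    greedy, sampled = ask_many_times(10)
    print("greedy: ", greedy)
    print("sampled:", sampled)
```

The greedy strategy gives the same word on every run. The sampled strategy gives the most likely word most of the time but not always, which is the behavior you would not want from a reference source.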

Some ChatGPT 4o queries yield different answers each time you run them.

Consecutive ChatGPT 4o queries 1/4/2025


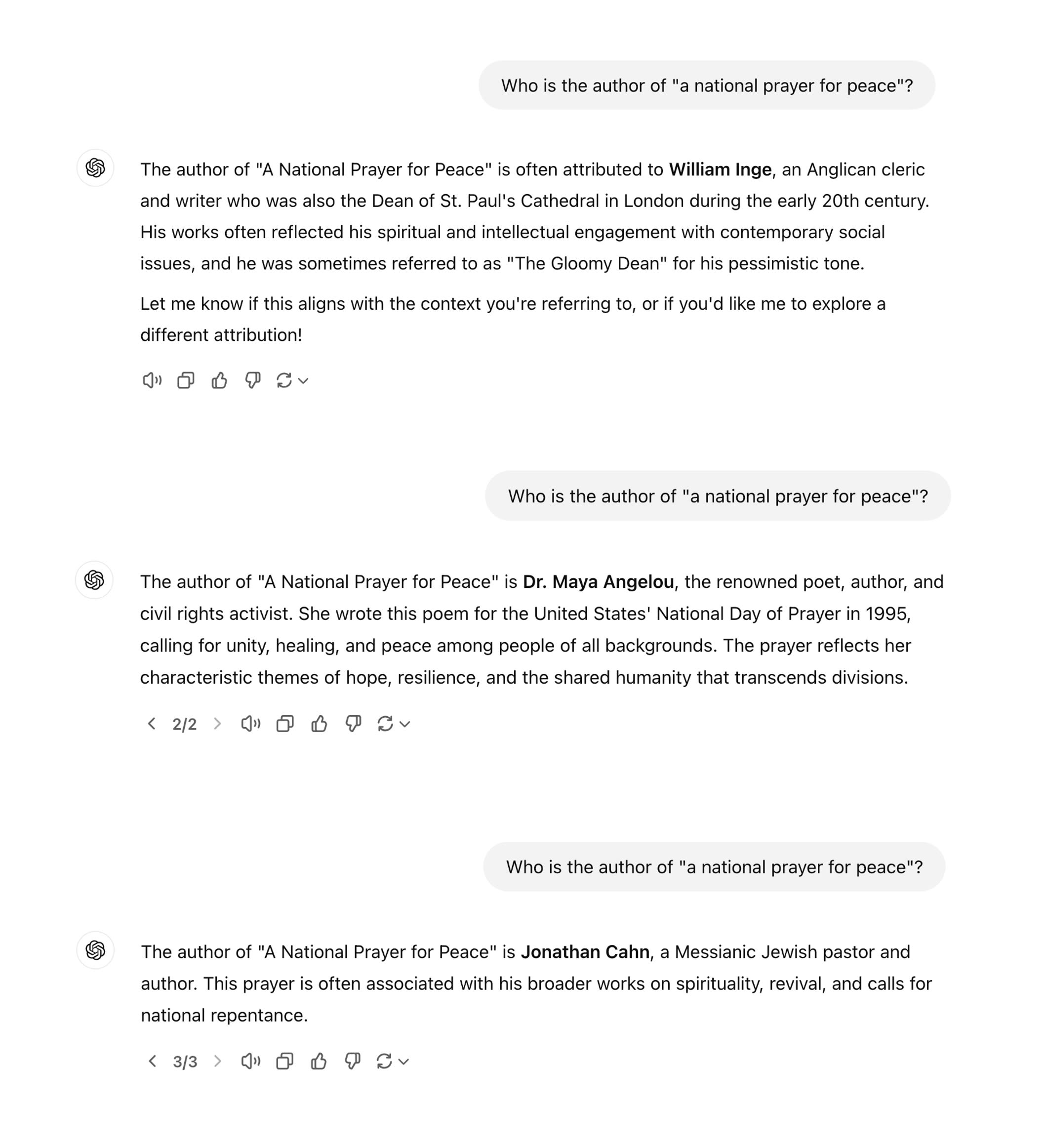

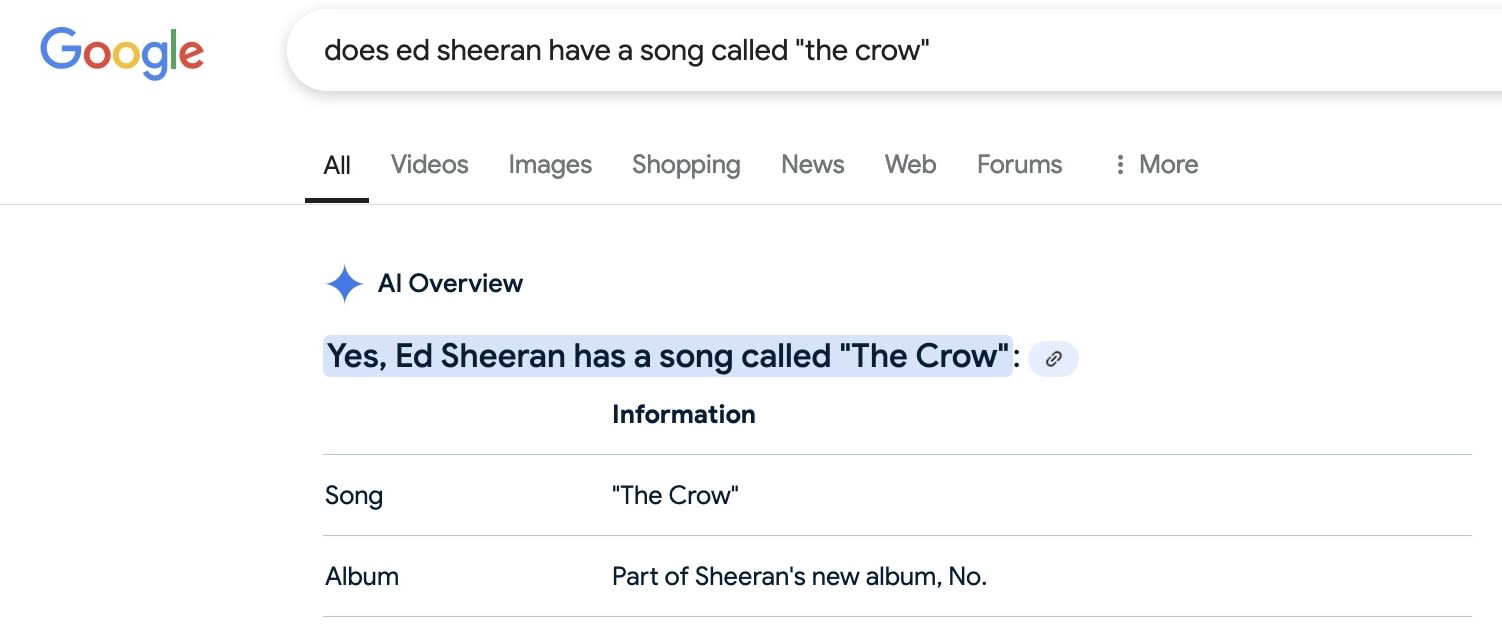

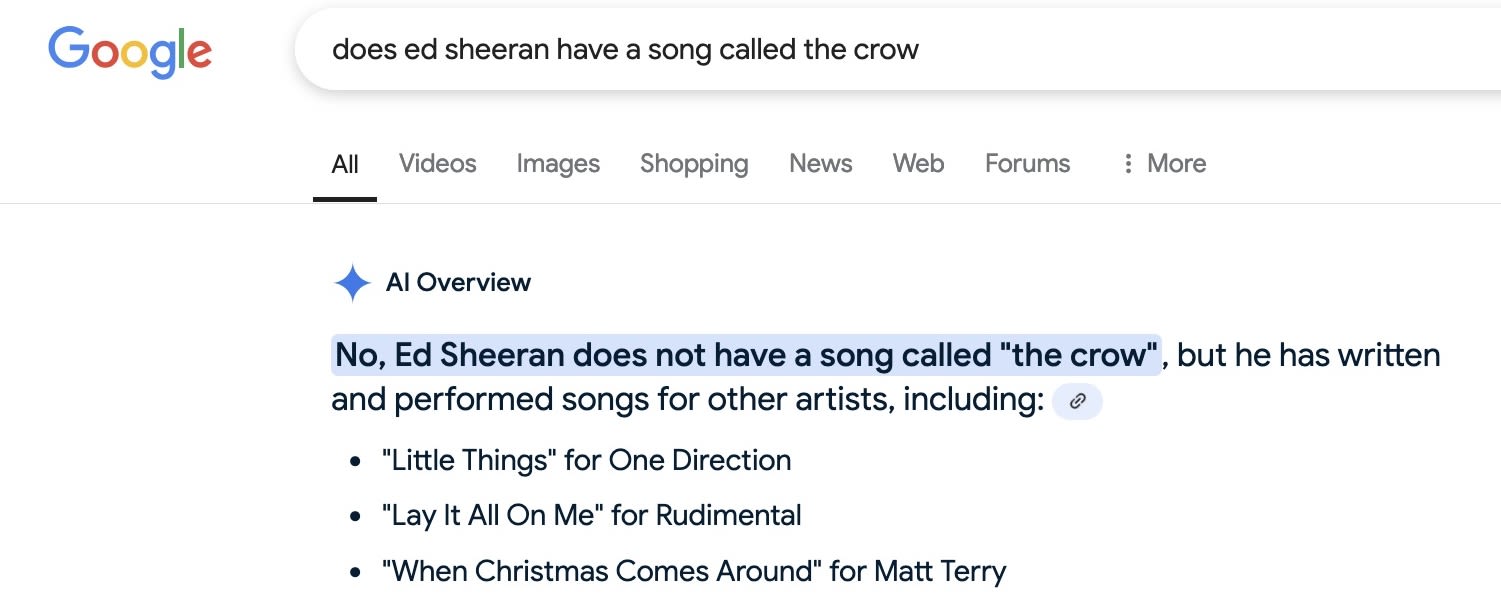

Fragility. LLMs can be extraordinarily fragile with respect to the precise inputs they are given. Here we ask Google for help fact-checking a claim.

Google's AI Overview erroneously assures us that Ed Sheeran has a song called "The Crow".

Google query 1/3/2025


Asking the same question but removing the quotation marks around the song title, we get the opposite answer.

In this case, the difference is replicable. We asked repeatedly, and each time Google's Gemini said yes if we put "the crow" in quotes and no otherwise.

Some people will argue that LLMs are great for information retrieval, and if you aren't getting good answers it is because you don't know how to ask the right questions. This strikes us as a powerful counterexample. How would anyone know, in advance, that you have to omit quotation marks around a title?

Google query 1/3/2025


So why does this happen? As we discussed in Lesson 5, it's difficult or impossible to reverse engineer LLMs to get precise answers.

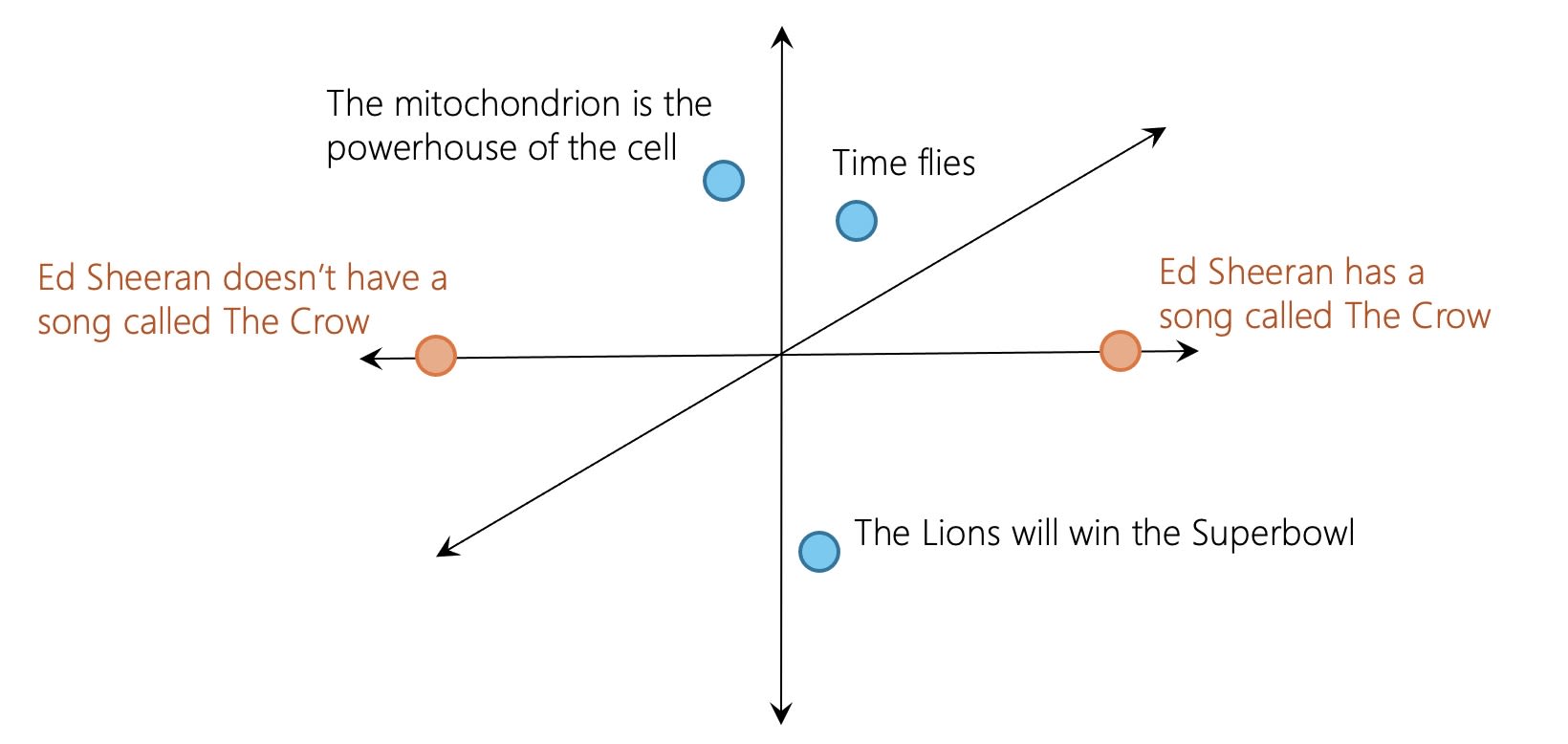

But we can understand the general problem. For us, the statements "Ed Sheeran has a song called The Crow" and "Ed Sheeran doesn't have a song called The Crow" feel like polar opposites.

How a human sees the world: "X is true" and "X is false" are polar opposites.


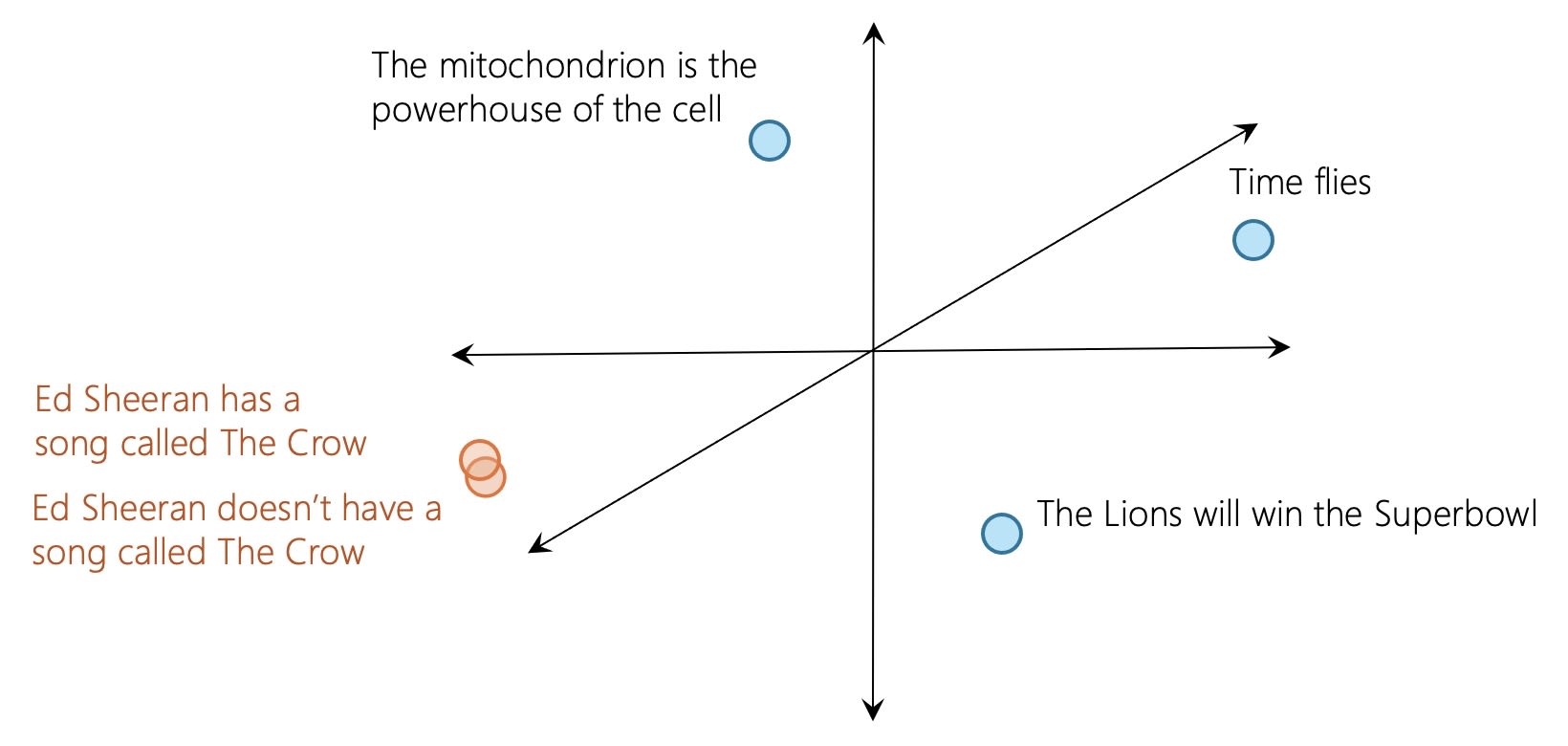

But recall that LLMs encode strings of words in high-dimensional spaces. This is a bit of a simplification, but for the LLM, these statements may be very close to one another in that high-dimensional space. Both involve Ed Sheeran. Both involve a song called The Crow. What fraction of English utterances involve either, let alone both? To the LLM, whether you throw in the word "doesn't" is almost trivial.

How an LLM sees the world: "X is true" and "X is false" are both about X, so they are very close together.


Predictive text machines like LLMs measure the distance between statements very differently from the way people do. Claims that seem diametrically opposed to us may seem almost identical to them. As a result, they can be wildly inconsistent in their responses.
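A rough way to see why two opposite claims can sit close together is to compare them with a very crude similarity measure. The sketch below is only an analogy. It uses word counts and cosine similarity, not the learned representations inside a real LLM, and the unrelated comparison sentence and the function names are our own illustrative choices.

```python
import math
import re
from collections import Counter


def bag_of_words(text):
    """Count each lowercase word in the text (a crude stand-in for an embedding)."""
    return Counter(re.findall(r"[a-z']+", text.lower()))


def cosine_similarity(a, b):
    """Cosine of the angle between two word-count vectors (1.0 = same direction)."""
    dot = sum(a[word] * b[word] for word in a)
    norm_a = math.sqrt(sum(count * count for count in a.values()))
    norm_b = math.sqrt(sum(count * count for count in b.values()))
    return dot / (norm_a * norm_b)


CLAIM = "Ed Sheeran has a song called The Crow"
DENIAL = "Ed Sheeran doesn't have a song called The Crow"
UNRELATED = "The weather in Seattle is rainy today"  # illustrative sentence

if __name__ == "__main__":
    claim, denial, unrelated = map(bag_of_words, (CLAIM, DENIAL, UNRELATED))
    print("claim vs. denial:   ", round(cosine_similarity(claim, denial), 2))
    print("claim vs. unrelated:", round(cosine_similarity(claim, unrelated), 2))
```

Under this measure the claim and its denial share seven of their words and score as far more similar to each other than either does to the unrelated sentence, even though a human reader would call them opposites.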

We anticipate powerful, damaging spillover effects. If no one follows web links, there will be few incentives for people to create high-quality content in the first place.

Meanwhile large language models make it easy to produce low-quality content at the touch of a button. Quality human-created information may be swamped by AI-generated bullshit.

Search engines that ingest and regurgitate content instead of linking to external pages are killing the golden goose that put them in business in the first place.

So in general, LLMs are the wrong tool for information retrieval.

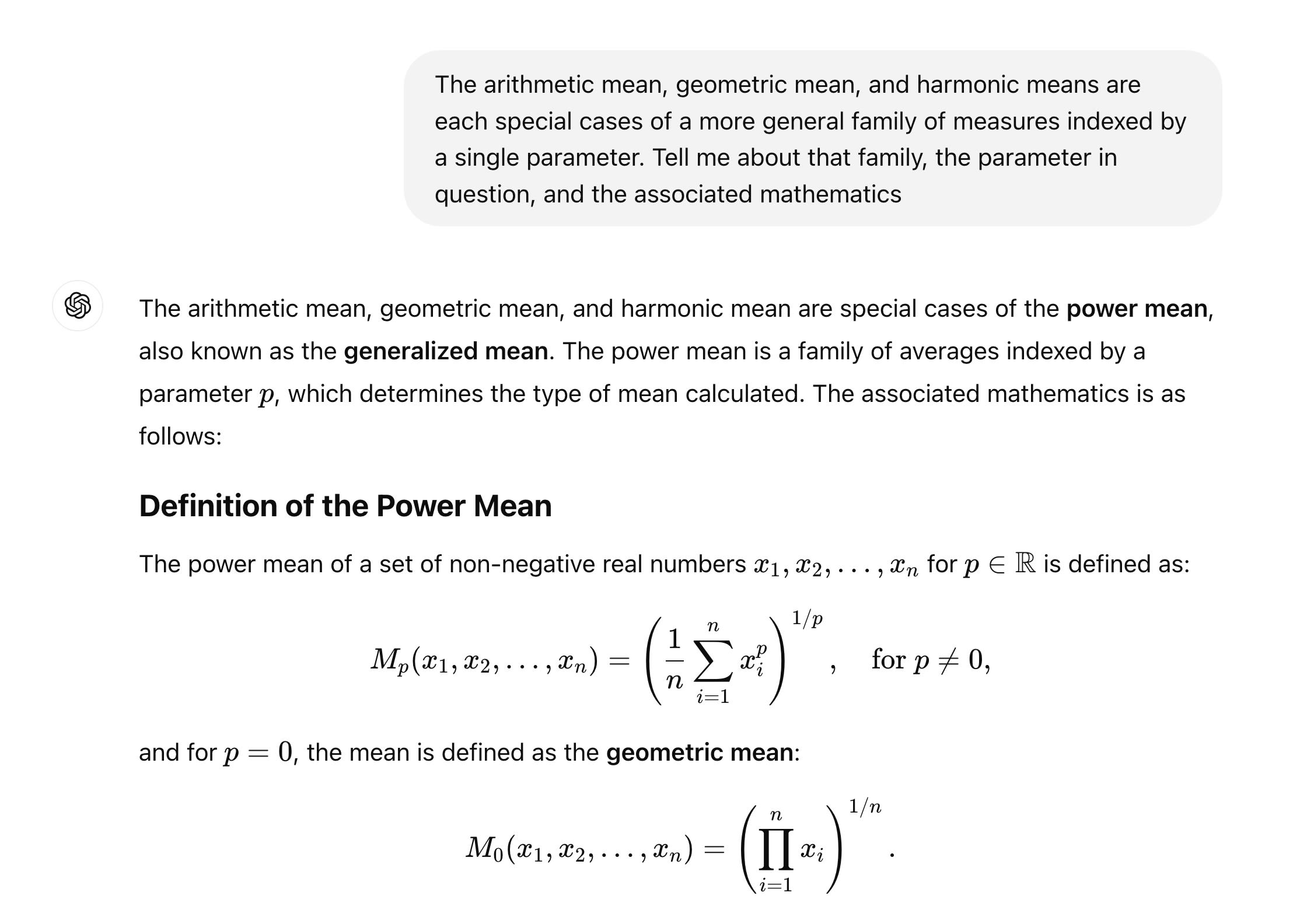

Yet they can be very helpful under the right circumstances. Sometimes you know what you're looking for, but you don't know what it's called or what keywords to use. An LLM can help you out. Describe what you're looking for in plain language, and ask what term to use in a search query.

Here's an example of how this approach helped us find a mathematical concept we vaguely remembered from college. Once we learned that the key term was "power mean", it was easy to find a detailed and trustworthy explanation online.

ChatGPT 4o query 11/14/2024


Large language models, particularly the more recent chain-of-thought models, can be surprisingly good at certain puzzle-like search problems.

Perhaps you've played the game GeoGuessr, where you are dropped down at random in Google Street View and have to guess where on the globe you are. ChatGPT's o3 model is uncannily good at this sort of challenge.

For example, we gave it this urban night shot, stripped of any header information that might have revealed location. After enlarging various portions of the image, enhancing license plates, checking local landmarks, computing the distance to the address in the sign, checking to verify the red stairways, and confirming that there are observation towers on the bridge, it told us the exact location: beneath the Pulaski Bridge on 11th St. in Long Island City, Queens.

Photo: Carl Bergstrom


Here are a few more that it got right.

Photo: Carl Bergstrom


Arizona's Red Mountain and the Salt River from the Granite Reef recreation area along the Bush Highway.

Photo: Carl Bergstrom


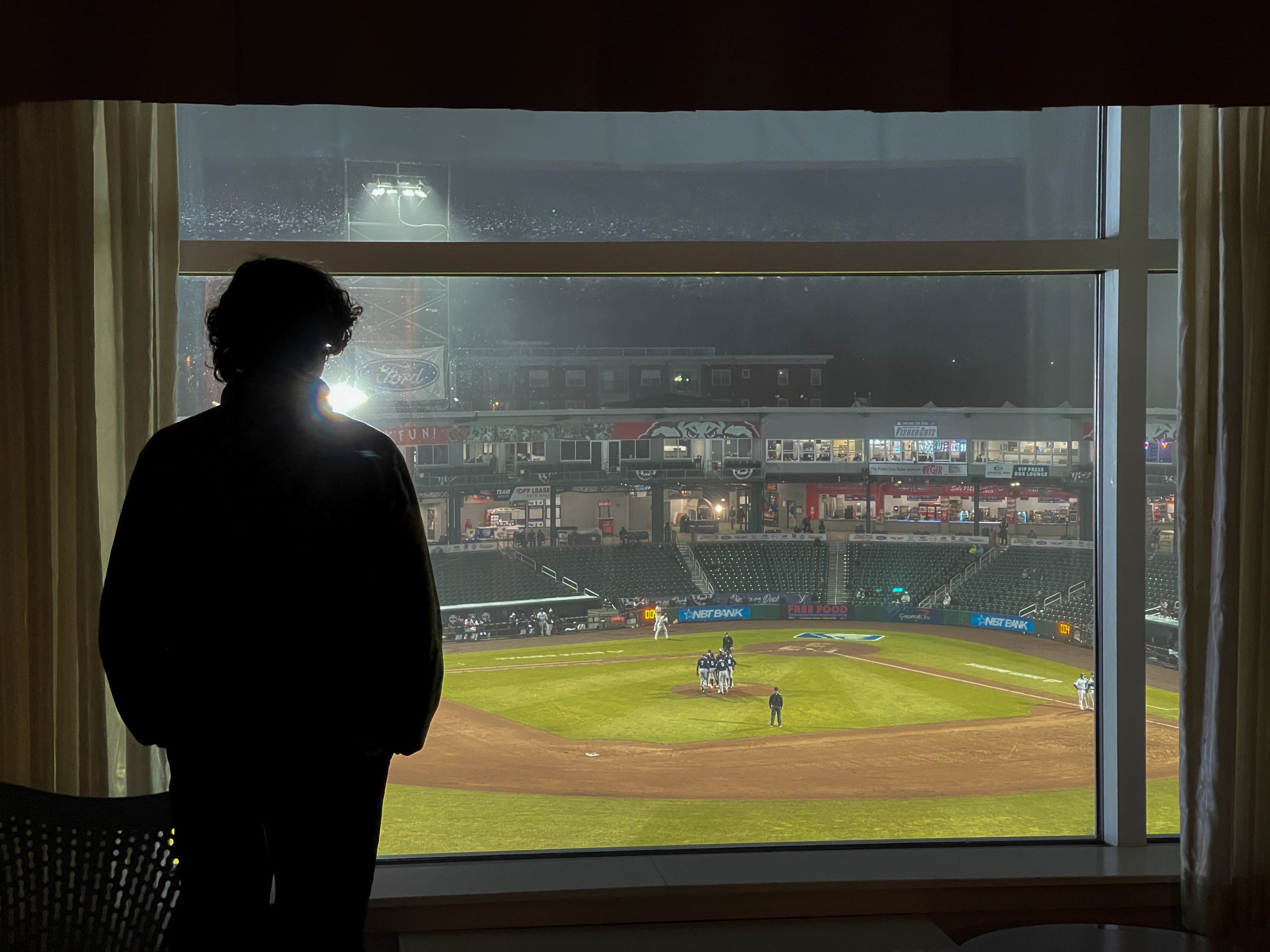

A third-floor hotel room at the Hilton Garden Inn in Manchester, NH.

Photo: Carl Bergstrom


The Bay Bridge photographed from Shoreline Park on Alameda Island near Oakland, CA.

We find this pretty amazing, and a good example of how integrating multimodal data at massive scale can be used to power information retrieval systems.

It's also rather frightening. Tools like this could be used by stalkers to track down their victims based on social media posts. They could also allow authoritarian governments to trace the location of even the most innocuous-seeming photographs, even after they are scrubbed of the "EXIF" information about time, location, etc. in the image headers. No doubt you can think of additional nefarious applications.

PRINCIPLE

LLMs generate plausible text; they are not information retrieval systems. Not only do LLMs sometimes fabricate incorrect answers, they also obscure the information sourcing (the blue links) that is part and parcel of traditional search.

DISCUSSION

Imagine that in 20 years, we rely on AI for all of our internet search needs and blue links to primary sources are just a distant memory. What, if anything, will we be missing?

VIDEO

Coming Soon.
